The technology that’s required to guide and pilot autonomous (self-driving) vehicles—keeping them on the road and on their route—is only one challenge.

The even greater challenge is that on city streets, for the foreseeable future, autonomous vehicles will be sharing the roadways not just with conventional, human-driven vehicles but also with pedestrians, bicyclists, and riders on motorcycles and mopeds.


Identifying pedestrians and other vehicles, visually and perhaps with the help of their smartphones, is part of the challenge. But it’s what happens with this traffic mix that will have a major role in how quickly autonomous vehicles catch on, and whether they’re seen as a harmonious, accident-reducing net positive for society, or just mercurial bots that could inflict injury or death.

An autonomous message...with Leaf hints

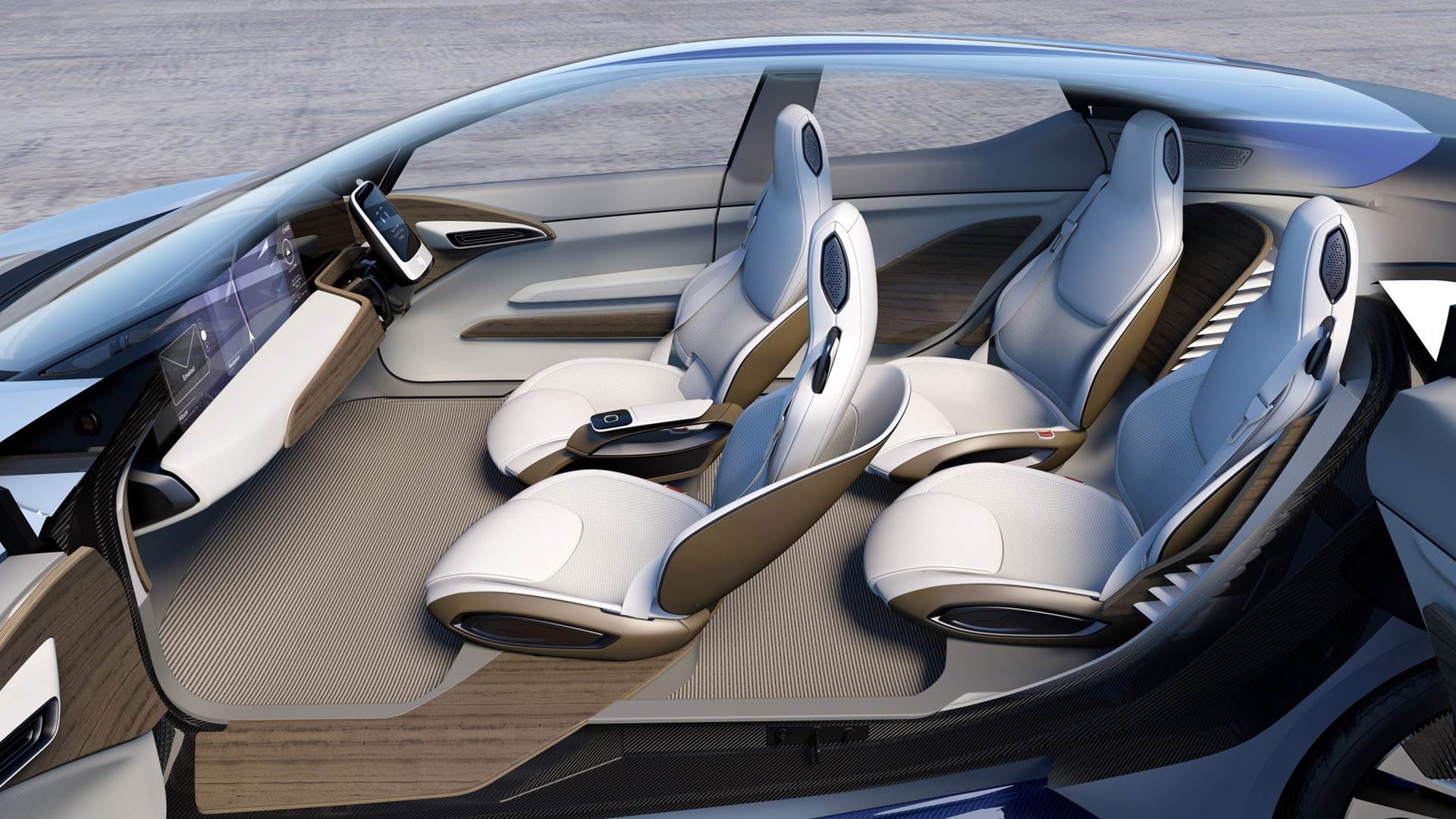

Giving autonomous cars the right social cues is a central theme of the IDS Concept, the car Nissan showed at the Tokyo Motor Show. The IDS may hint at the direction of the next Nissan Leaf, and it might be a formal nod that Nissan is seriously considering inductive charging and a much larger battery pack. But its most important contribution may be how it gets us thinking about the ways an autonomous car will need to communicate with the world around it.

As announced at the Tokyo show, Nissan aims to offer Piloted Drive 1.0 (essentially hands-off smart cruise control for the highway) in Japan beginning in 2016, with other markets, including the U.S., soon to follow. Piloted Drive 2.0, which adds full support for automated lane changes on the highway plus some city-driving functionality, should be ready around 2018. Version 3.0, with full Intelligent Driving (truly autonomous) capability such as automatic intersection negotiation and remote piloted parking, is targeted for around 2020, contingent on governments allowing such features.


Minimizing the chances of accidents means that autonomous vehicles have to communicate clearly with those outside them. It also requires making sure they drive in “socially acceptable” ways: maintaining the flow of traffic in the absence of painted lane markings, when drivers around them are behaving erratically, or when pedestrians (or deer) dart into the street ahead.

They can’t be overly aggressive, unpredictable, or hesitant, as that might result in mishaps, or at the very least a lack of trust in piloted vehicles.

The when and where of very different driving styles

Complicating things further, social expectations of how drivers should behave will differ dramatically from place to place, and can even depend on the time of day.

To address this, Nissan has put tens of people to work studying ethnographic factors and how pedestrians and bicyclists interact with vehicles. In doing so, the team is building on the cognitive-engineering and design research of Dr. Don Norman.


Nissan has a team of researchers, headed by Martin Sierhuis, a former NASA research scientist, working on that task out of its Silicon Valley office.

Applying some of those ethnographic factors, combined with physical cues such as eye contact, waving, or a driver turning the front wheels to one side at a stop, would give the piloted vehicle a far better understanding of what a human pedestrian (or other driver) is likely to do next.

Part of it is simply being polite rather than always following the letter of the traffic rules, and that is a complex artificial-intelligence task. In some city-driving situations, for example, it's rude not to let a pedestrian cross if you're already coming to a stop, while in others, stopping would interrupt precious traffic flow.

Intention broadcasting: Like a bus rollsign for cars

To communicate what an autonomous vehicle is going to do next, Nissan is looking at bold ways to do “intention broadcasting” from its autonomous cars.

On the IDS Concept shown at last week’s Tokyo Motor Show, an “interaction indicator” (a high beltline of light that runs from the grille and headlights along the sides of the vehicle) changes color from blue to green, yellow, orange, and red, depending on the alert level at that moment.

Meanwhile, there’s a large text readout at the base of the windshield, facing outward, that tells those in front of the vehicle what the intent is, such as “after you.”

Be sure to watch the video at the top of this piece to see how it all works, and let's hope that autonomous cars, when they arrive, are this communicative.
