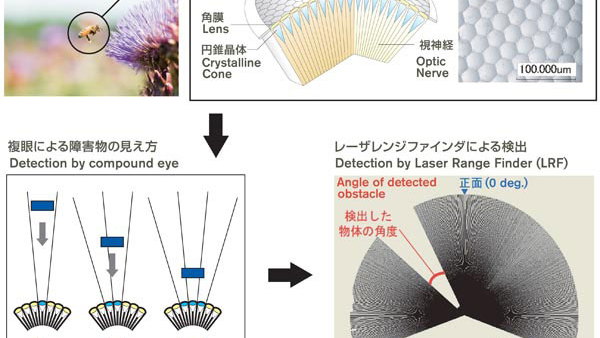

Compound eyes are unsettling to look into, but looking out of them gives bees the ability to see nearly 300 degrees around them. Scanning such a wide area for obstacles might seem demanding, but the nature of the compound eye, which divides the bee's view into numerous tiny segments and then composites them back together, makes location detection part of the job of seeing, lightening the load on the bee's tiny brain.

Likewise, the Nissan system, which replaces the compound eye with a laser scanning system, builds location detection directly into the process of 'seeing' the world around the car. As a result, the system doesn't need complex CPUs and can run on simple microprocessors.

"The biggest difference to any current system is that the avoidance maneuver is totally instinctive. If that was not so, then the car robot would not be able to react fast enough to avoid obstacles," said Toshiyuki Andou, manager of Nissan's Mobility Laboratory.

"It must react instinctively and instantly because this technology corresponds to the most vulnerable and inner-most layer of our Safety Shield, a layer in which a crash is currently considered unavoidable," he added. "The whole process must mirror what a bee does to avoid other bees. It must happen within the blink of an eye."

The concept technology is already being tested in a robotic microcar known as BR23C. This very un-car-like car more closely resembles a stylized humanoid, and its primary function is to demonstrate the adapted collision avoidance system. Like a car, which can only react to its environment on the two-dimensional plane of the road, the BR23C detects anything within a two-meter (6.6 ft) radius of 'personal space' and uses an algorithm that slows, accelerates, or rotates the robot to avoid a collision.
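Nissan has not published the BR23C's algorithm here, so the following is only a minimal Python sketch of a purely reactive avoider of the kind described, under stated assumptions. Only the two-meter detection radius and the three maneuver types (slow, accelerate, rotate) come from the article; the `Obstacle` type, the `choose_maneuver` function, the bearing convention, and the 30/150 degree thresholds are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import Iterable

# Detection radius reported for the BR23C; everything else below is hypothetical.
PERSONAL_SPACE_M = 2.0


@dataclass
class Obstacle:
    distance_m: float   # range to the obstacle, in meters
    bearing_deg: float  # hypothetical convention: 0 = dead ahead, positive = left, range (-180, 180]


def choose_maneuver(obstacles: Iterable[Obstacle]) -> str:
    """Hypothetical reactive rule: map the nearest intruder to one maneuver.

    Returns one of 'cruise', 'slow', 'accelerate', 'rotate_left', 'rotate_right'.
    No map, no path planning: one decision per sensor sweep.
    """
    # Ignore anything outside the two-meter personal space.
    inside = [o for o in obstacles if o.distance_m <= PERSONAL_SPACE_M]
    if not inside:
        return "cruise"

    # React only to the closest obstacle inside the personal space.
    nearest = min(inside, key=lambda o: o.distance_m)
    b = nearest.bearing_deg

    if abs(b) <= 30:
        # Roughly dead ahead: rotate away from the side the obstacle is on.
        return "rotate_right" if b >= 0 else "rotate_left"
    if abs(b) >= 150:
        # Closing from behind: speed up to open the gap.
        return "accelerate"
    # Off to one side: slow down and let it pass.
    return "slow"


if __name__ == "__main__":
    print(choose_maneuver([Obstacle(1.2, 10.0)]))   # obstacle ahead and slightly left
    print(choose_maneuver([Obstacle(3.5, 0.0)]))    # outside the two-meter radius
```

The point of a rule like this is that each decision is a constant-time lookup on the latest sensor reading rather than a search over possible paths, which is one plausible way a system could stay fast enough to run on the simple microprocessors the article mentions.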

So far the biomimetic technology is only at the concept stage, but the team behind the project hopes it could eventually be used in real vehicles to reduce, or even prevent, future crashes.